The more sophisticated AI models become, the greater their need to collect, combine, and interpret personal information, often drawn from multiple sources that individuals may not even be aware of.

This expanded processing makes it possible to identify behaviors, preferences, and relationships, building detailed profiles that go beyond isolated data points and can reveal sensitive aspects of everyday life, even when the original data appears harmless.
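To make the profiling point concrete, here is a minimal Python sketch of record linkage. Two hypothetical datasets, each fairly harmless on its own, are joined on shared quasi-identifiers (ZIP code, birth year, gender). The records, field names, and the `link_profiles` helper are all invented for illustration; real-world linkage typically uses fuzzier matching over far larger data.

```python
# Hypothetical toy records; every value is invented for illustration.
loyalty_purchases = [
    {"zip": "10115", "birth_year": 1985, "gender": "F",
     "items": ["glucose test strips", "sugar-free snacks"]},
    {"zip": "10117", "birth_year": 1990, "gender": "M",
     "items": ["running shoes"]},
]
fitness_app_users = [
    {"zip": "10115", "birth_year": 1985, "gender": "F",
     "username": "anna_runs", "avg_bedtime": "23:40"},
    {"zip": "10117", "birth_year": 1972, "gender": "M",
     "username": "kbike", "avg_bedtime": "22:10"},
]

# Attributes that are not identifying alone but can single people out in combination.
QUASI_IDENTIFIERS = ("zip", "birth_year", "gender")


def linkage_key(record):
    """Build the join key from the quasi-identifier fields."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def link_profiles(left, right):
    """Merge records whose quasi-identifiers match exactly one record in the other source."""
    index = {}
    for record in right:
        index.setdefault(linkage_key(record), []).append(record)

    profiles = []
    for record in left:
        matches = index.get(linkage_key(record), [])
        if len(matches) == 1:  # a unique match is treated as "same person"
            profiles.append({**record, **matches[0]})
    return profiles


profiles = link_profiles(loyalty_purchases, fitness_app_users)
for profile in profiles:
    print(profile["username"], profile["items"], profile["avg_bedtime"])
```

Neither source contains a name, yet the single unique match attaches a purchase history that hints at a health condition, plus sleep habits, to an app username. Requiring exactly one match is a deliberate simplification; ambiguous matches are simply skipped in this sketch.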

Recent discussions in Europe have addressed potential adjustments to data protection frameworks and to the rules on tracking technologies, sparking debate among privacy advocates. Regulators argue that such changes aim to simplify compliance and foster innovation, while critics warn that they risk weakening personal data protections. At its core, the debate is about balancing technological advancement with the safeguarding of fundamental rights.

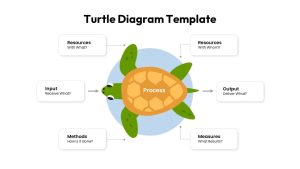

From an operational perspective, AI changes the traditional logic of data protection. It does not simply store data; it interprets it and extracts new insights. When data circulates across systems, algorithms, and third parties, exposure increases and control becomes more complex.

Additionally, AI systems can infer new information from legitimately collected data. This challenges key data protection principles such as purpose limitation and data minimization, since what a system will infer is not always predictable at the time the data is collected.

From an institutional standpoint, privacy risks also stem from governance decisions, transparency gaps, and weak algorithmic accountability. Organizations that deploy AI without clear policies on explainability, data retention, and control are more exposed to regulatory and reputational risks.

In the end, addressing privacy in AI requires deliberate decisions about how, why, and to what extent data should be used.

Why Is Data Privacy Particularly Sensitive in AI?

Data privacy becomes especially sensitive in AI because data is not treated as static. Its value comes from being correlated, reprocessed, and reinterpreted over time.

Even seemingly neutral datasets can reveal deeper insights when analyzed by models capable of identifying patterns and reconstructing behaviors. This allows systems to anticipate individual decisions, making the impact on personal privacy far broader than the raw data would suggest.

AI operates on probabilistic logic, transforming data into inferences. Based on legitimate records, systems may deduce information that was never explicitly provided, such as behavioral patterns, socioeconomic conditions, or consumption habits.
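The probabilistic logic described above can be illustrated with a minimal Python sketch: a Bernoulli naive Bayes classifier with add-one (Laplace) smoothing, trained on a handful of hypothetical labeled records, estimates an income bracket that the person never disclosed. The feature names, labels, and the `train` and `infer` helpers are assumptions made for illustration, not a description of any particular production system.

```python
import math
from collections import Counter, defaultdict

# Hypothetical labeled history: observed habits -> attribute the system wants to guess.
training = [
    ({"late_night_orders", "premium_brand"}, "high_income"),
    ({"premium_brand", "frequent_travel"}, "high_income"),
    ({"discount_coupons", "late_night_orders"}, "low_income"),
    ({"discount_coupons", "bulk_buying"}, "low_income"),
]


def train(data):
    """Count class frequencies and per-class feature occurrences."""
    class_counts = Counter(label for _, label in data)
    feature_counts = defaultdict(Counter)
    vocabulary = set()
    for features, label in data:
        feature_counts[label].update(features)
        vocabulary |= features
    return class_counts, feature_counts, vocabulary


def infer(features, model):
    """Return the posterior probability of each label given a set of observed features."""
    class_counts, feature_counts, vocabulary = model
    total = sum(class_counts.values())
    log_scores = {}
    for label, count in class_counts.items():
        score = math.log(count / total)  # log prior
        for feature in vocabulary:
            # Laplace-smoothed probability that this feature is present for this label.
            p = (feature_counts[label][feature] + 1) / (count + 2)
            score += math.log(p if feature in features else 1 - p)
        log_scores[label] = score
    # Convert log scores to normalized probabilities (subtract max for numerical stability).
    best = max(log_scores.values())
    weights = {label: math.exp(s - best) for label, s in log_scores.items()}
    norm = sum(weights.values())
    return {label: w / norm for label, w in weights.items()}


model = train(training)
posterior = infer({"premium_brand", "late_night_orders"}, model)
print({label: round(p, 2) for label, p in posterior.items()})
```

With this toy data, a profile showing premium-brand purchases and late-night orders receives a posterior of about 0.9 for `high_income`. The classifier is deliberately simple; the point is that its output is an inference about an attribute the person never provided, derived entirely from ordinary purchase records.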

In this context, the sensitivity lies not only in the data itself but in the outcomes generated by its processing. Exposure results not only from excessive data collection but also from the analytical capabilities of algorithms.

Another critical factor is the asymmetry of power between those who develop or operate AI systems and those whose data is processed. The opacity of models, combined with the scale and speed of processing, reduces individuals’ ability to understand and control how their data is used.

Protecting privacy in AI therefore requires responsible decisions regarding data governance, usage limits, and accountability.

What Is the Biggest Ethical Challenge in AI Today?

The greatest ethical challenge of AI lies in the concentration of decision-making power within opaque systems operating at scale.

AI already influences areas such as credit approval, recruitment, pricing, content moderation, and service prioritization, often without transparency about how decisions are made.

When algorithmic logic is not accessible, the ability to question, correct, or assign responsibility becomes limited. This creates a significant ethical imbalance between technical efficiency and social fairness.

This challenge is amplified because AI models learn from historical data, which may contain biases, inequalities, and systemic distortions. As a result, AI can reproduce, and sometimes amplify, these biases while appearing neutral.

Another concern is that the rapid adoption of AI outpaces the development of governance, oversight, and accountability mechanisms. Organizations may delegate sensitive decisions to systems that were not designed to evaluate human or social impact.

The ethical challenge, therefore, is to define clear boundaries: where AI should act autonomously, where it should support human judgment, and how to respond when outcomes exceed acceptable limits.

Conclusion

In this context, understanding how AI affects privacy has become a key factor in institutional decision-making.

The balance between innovation and protection will depend on establishing clear standards for responsibility, transparency, and limits of use before technology advances further.

Ultimately, the maturity of AI will not be measured solely by the sophistication of its models, but by the ability of organizations and public institutions to guide its use in alignment with fundamental rights and societal expectations.